Unable to synchronize IMUs while streaming or while saving locally

Board information:

Using 4 MMR

Hardware revision [0.3]

Firmware revision [1.5.0]

Model Number [5]

Host Device Information:

iPhone 11

iOS 13.3.1

Using the MetaBase app

For our use case it is crucial that we accurately synchronize the IMU data and compare time signals between IMUs. I know the tutorial recommends simply using the epoch times in the CSVs to correlate events, but in my experience that was not good enough. Instead I tried using calibration events, where both IMUs were subjected to high acceleration from the same event, in order to identify an offset that synchronizes the elapsed times of the IMUs. When that still wasn't satisfactory I did additional testing: I attached both IMUs to a hammer, made regular hits, and compared the elapsed time at which each IMU reported peak acceleration. For this testing I only had basic acceleration on, at 100 Hz, both streaming and locally recording the data, and I found the peaks of the acceleration magnitude (the vector sum of the three axes). From the other posts I have seen regarding synchronization, it appears that non-sensor-fusion metrics are recommended to reduce processor latency, and that recording locally is better, as on the order of 1% of data can be lost while streaming.
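To make the peak-finding step concrete, here is a minimal sketch of how it could be done. It is not the exact processing used for the plots; the function names, the threshold, and the minimum gap between hits are all illustrative assumptions.

```python
import math

def magnitude(x, y, z):
    """Magnitude of one acceleration sample (x, y, z in g)."""
    return math.sqrt(x * x + y * y + z * z)

def find_peaks(times, mags, threshold, min_gap):
    """Return one (time, magnitude) pair per hit.

    A hit is a burst of samples at or above `threshold`; samples closer
    than `min_gap` seconds to the current peak are treated as part of
    the same hit, and only the largest one is kept.
    `threshold` and `min_gap` are assumed tuning values, not values
    taken from the experiments.
    """
    peaks = []
    for t, m in zip(times, mags):
        if m < threshold:
            continue
        if peaks and t - peaks[-1][0] < min_gap:
            # Same hit: keep the larger sample.
            if m > peaks[-1][1]:
                peaks[-1] = (t, m)
        else:
            peaks.append((t, m))
    return peaks

# Tiny synthetic example: two hits roughly one second apart.
example_times = [0.00, 0.01, 0.02, 0.03, 1.00, 1.01, 1.02]
example_mags = [1.0, 5.0, 8.0, 2.0, 1.0, 9.0, 3.0]
example_peaks = find_peaks(example_times, example_mags, threshold=4.0, min_gap=0.5)
```

At 100 Hz the time of each peak can only be resolved to about one sample period (10 ms), so this step alone cannot explain differences of hundreds of milliseconds.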

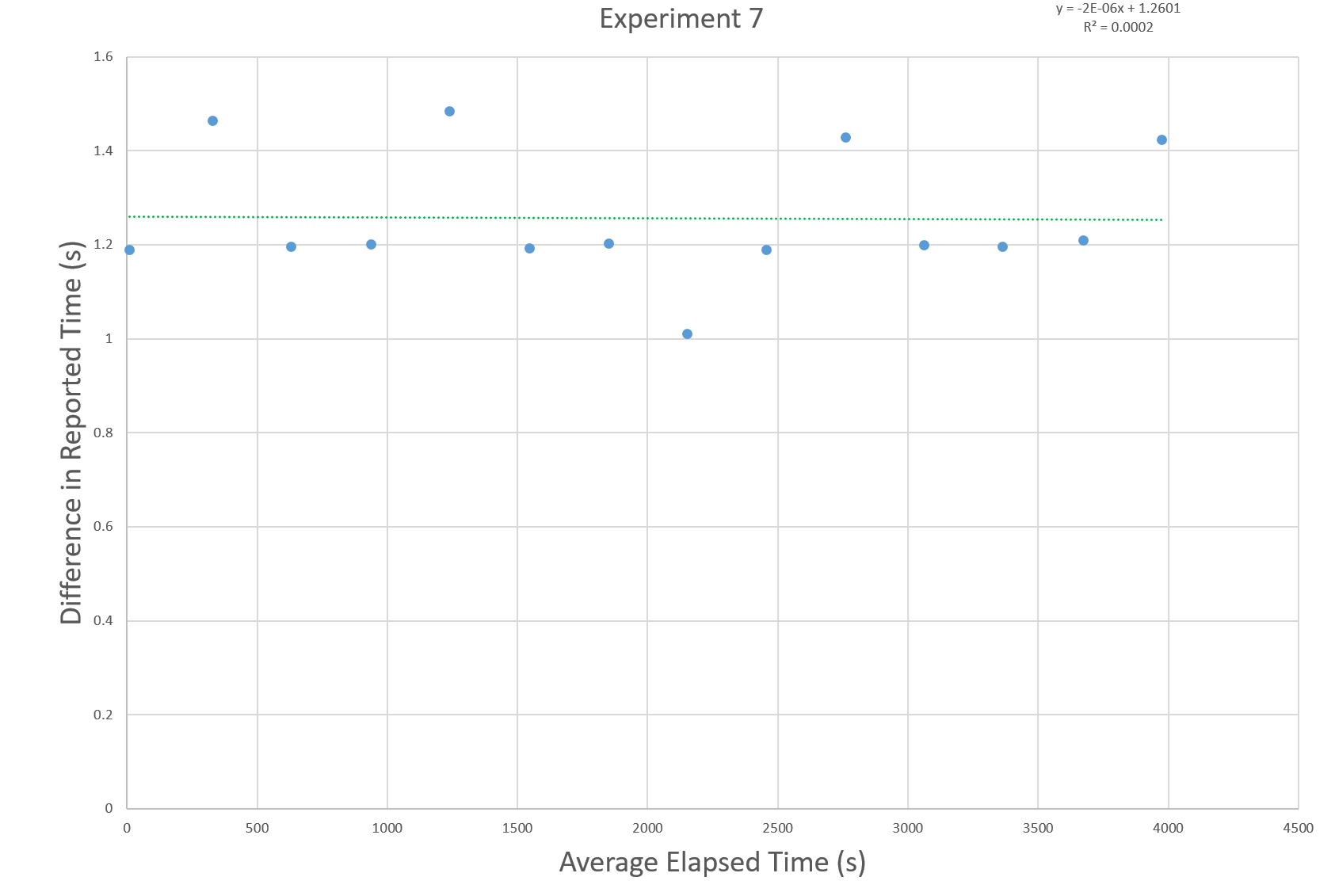

The results of one locally recorded experiment are shown below, with the difference between the elapsed times reported at each acceleration peak plotted against the average elapsed time of the two sensors. Of course some of the offset is due to different starting times for each sensor, which can be easily accounted for, but there is a variation of almost half a second between when the elapsed times are closest to and farthest from each other.

This drift doesn't appear easy to account for mathematically, and it matches the results of the other trials I did. If posting more trials, or the raw data from those trials, would help, just let me know.

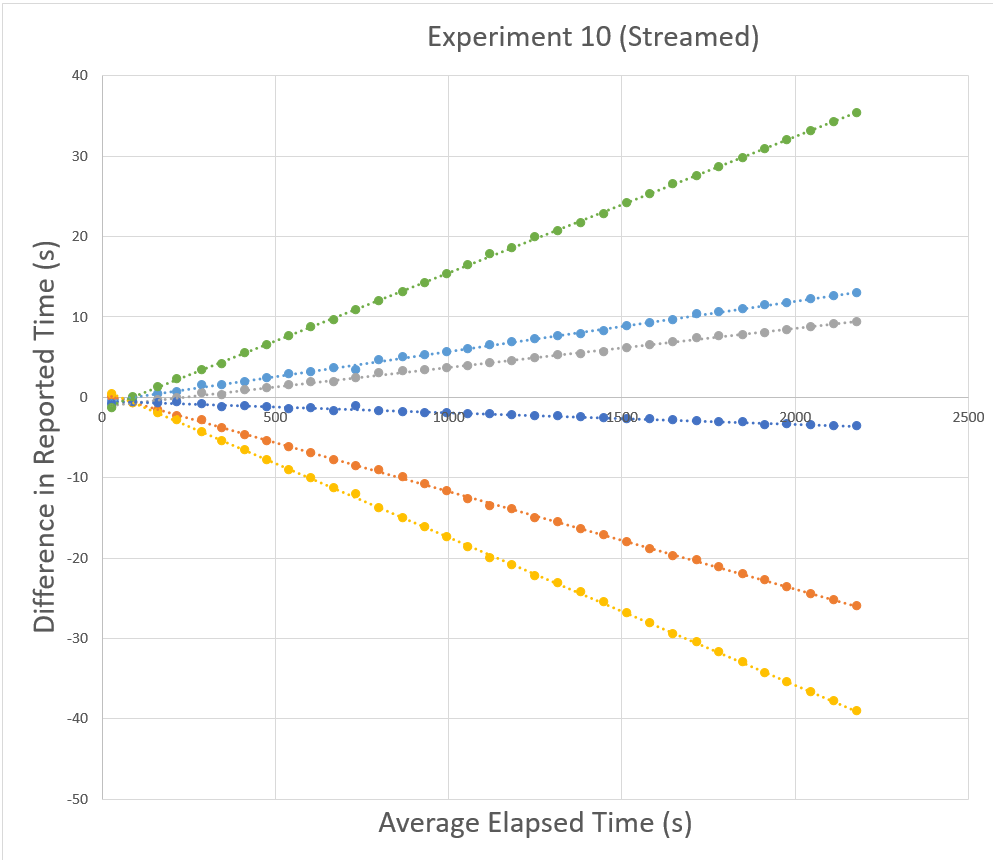

As for the streamed trials, the same calibration method shows a large linear offset combined with what seems to be the same variation present in the locally recorded data.

Though the linear curve appears to fit the data pretty well, the deviation between the curve and the actual data can be as high as half a second. Once more, I am happy to post more trials or raw data from the streamed testing.

Overall, is there something I am missing, or are these sensors just not capable of temporal synchronization?

Comments

Are we talking about a difference of 0.2 seconds here? Which would amount to user error or bluetooth latency? Can you elaborate more with data?

You should know there will be human error and bluetooth latency in

That is not an acceptable experiment as a result. You could easily have up to 1s between events just from your human error.

Even saying: I pressed the start button at the same time on both sensors is not acceptable. That will result in a 0.5s potential delay...

Basically I don't think you are synchronizing correctly. Use the timestamps.

I apologize if it wasn't clear from my post but the data I present here is from experiments where the IMUs were attached to the same hammer as the source of a high acceleration event, precluding at least some of the sources of human error you suggest may have occurred.

To expand a little bit on the procedure, two photos of the sledgehammer with 4 IMUs on are attached. The general procedure was to allow the hammer to fall from a height of approximately 10 inches above the wood every 1 or 5 minutes depending on the test, though importance was placed on the precise timing when the hammer was allowed to fall.

Yes, this is much better and looks acceptable.

Now you should only have Bluetooth latency to deal with and the minor latency when syncing the local clock with the sensor clock.

Can you share some data between two sensors? What is the time difference? Are you streaming or logging?

PS: Keep it to 2 sensors only for now to make the testing simple.

Sure, here is the raw data from experiment 7, which was logged on device using the hammer setup shown above with 2 IMUs (I apologize for the xlsx format; for some reason I was not able to upload them as CSV).

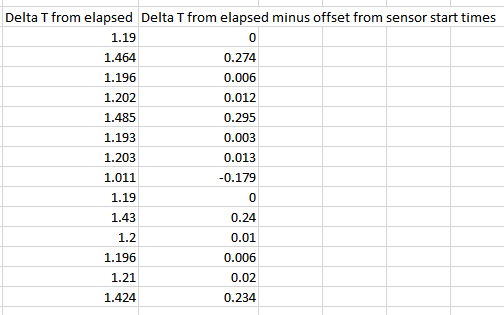

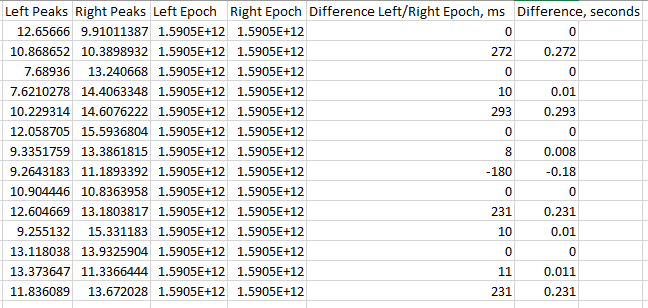

As for the processing I did, I found the local peaks of the acceleration magnitude, noting the magnitude and the elapsed time of each. For graphing, I then took the difference between the two IMUs' elapsed times at each peak, along with the average of the two elapsed times. The recorded elapsed times and the differences between them for the two IMUs are shown below.
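The per-peak comparison described above can be sketched as follows. This is only an illustration: the function name and the numbers are made up, and it assumes both IMUs detected the same hits in the same order.

```python
def compare_peaks(elapsed_a, elapsed_b):
    """Pair up the k-th peak of IMU A with the k-th peak of IMU B.

    Returns a list of (mean_elapsed, difference) tuples, where
    difference = elapsed_a - elapsed_b in seconds. Assumes both lists
    contain the same hits in the same order (an assumption that must
    be checked by hand if a hit was missed by one sensor).
    """
    if len(elapsed_a) != len(elapsed_b):
        raise ValueError("both IMUs must report the same number of peaks")
    rows = []
    for ta, tb in zip(elapsed_a, elapsed_b):
        rows.append(((ta + tb) / 2.0, ta - tb))
    return rows

def remove_start_offset(rows):
    """Subtract the first difference so only the change over time remains."""
    first = rows[0][1]
    return [(mean, diff - first) for mean, diff in rows]

# Hypothetical elapsed times (seconds) for three hits on each IMU.
rows = compare_peaks([10.0, 310.0, 610.0], [11.0, 311.5, 611.5])
relative = remove_start_offset(rows)
```

Subtracting the first difference removes the part of the offset that comes purely from the two sensors starting at different times.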

Also, you mention Bluetooth latency. Does Bluetooth latency affect logged data? My understanding, based on the other forum posts I saw, was that when logging, the IMU only saves the time since it began recording, and the reference to a non-relative time only comes at the data transfer step, using the system time of the device that downloads the data. Since I am logging and only looking at the elapsed time reported by the IMU, the transfer process shouldn't affect the data?

Looks like there's nothing wrong with this because they are independent sensors.

Each sensor records data at its own rate due to sensor latency and internal clock settings. We are talking about microseconds here but it's enough for you to see in the timestamps.

Each sensor is individual and will never start at the exact same microsecond in time as the other sensors (that's just not possible).

When you download the data, there is further latency from the Bluetooth link and the clock sync between the sensor and computer. Again, we are talking milliseconds here, which don't impact you at all.

What you need to do is to "intelligently" combine the two datasets. Don't use elapsed time, use the epoch and merge items that are close together (even if it means adding or deleting entries).

PS: Welcome to real world data (and not textbook data)

I understand that the sensors start recording at different times, and a fairer way to present the difference in elapsed time from before would be this, as most of the initial 1.19 second difference is from different start times.

But the difference here is not microseconds or milliseconds as you suggest, but substantial fractions of a second: the difference in elapsed time changed by 275 ms between the first and second data points. In a 5 minute period, one IMU measured 275 ms more passing than the other. This isn't consistent behavior, though, as sometimes the difference between the sensors over a 5 minute period is almost zero, so it is impossible to account for linearly. A calibration based on the first two events is not applicable between the second and third events, etc.
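To put a number on this, the disagreement can be computed per interval between consecutive hits rather than as a single offset. The sketch below is illustrative only; the function name and example values are assumptions, not the experiment 7 data.

```python
def interval_drift(times_a, times_b):
    """Compare how much time each IMU measured between consecutive hits.

    For every pair of consecutive shared events, returns a tuple
    (extra_seconds, fraction): extra_seconds is how much longer IMU A
    measured the interval than IMU B, and fraction is that value
    divided by IMU B's interval length.
    """
    out = []
    for k in range(1, len(times_a)):
        da = times_a[k] - times_a[k - 1]
        db = times_b[k] - times_b[k - 1]
        out.append((da - db, (da - db) / db))
    return out

# Hypothetical example: a 275 ms disagreement over the first 5 minutes,
# then almost none over the next 5 minutes.
drift = interval_drift([0.0, 300.275, 600.280], [0.0, 300.0, 600.0])
```

For a 275 ms disagreement over 300 s the fraction is about 0.00092, or roughly 0.09%. A constant clock-rate difference would give roughly the same fraction in every interval, which is not what the data shows.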

Does the download process change the reported elapsed time from a given measurement when logging locally? I understand epoch and the time stamp may be affected, but if I'm comparing relative elapsed times I don't understand how the download process should change the data. Do IMUs logging data communicate with the device except at the beginning or ending of testing?

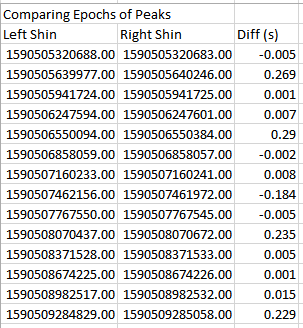

Epoch is simply elapsed time plus an offset based on when the sensor started recording, no? Comparing the epochs of the peaks gives almost identical results to comparing elapsed times and then subtracting the initial offset.

If I just assume the epochs are correct, then events that actually happened at the same time may appear as far as 0.3 seconds apart.

I understand this is real world data, which is why I've been putting effort into validation and trying to find a way to account for the error in timekeeping by the IMUs. Unfortunately the error seems transient and is not easily fixed by finding any sort of linear difference between the timekeeping of each IMU. If random errors of 0.3 seconds are what I should expect when comparing the timestamps between IMUs then I understand; I just wanted to confirm that my results accurately reflect the ability of the IMUs to keep track of time. Our results suggest that even calibration events every minute would not be enough to match up times between IMUs. I would have expected the most likely source of error to be a difference in the speed of the clocks on each IMU, leading to a linearly growing difference in reported times that could be easily accounted for. That is not the case, though, and the source of error seems to be somewhere else.

Again this all looks normal to me. You simply need to intelligently synchronize the sensor data in the real world.

Could you at least expand a little bit on what you mean by "intelligently synchronize"?

Use the epochs to "match" closest value between sensors, even if you have to delete or repeat entries. I would write a script to do this for me.

Assuming I understand your method, it is essentially a more advanced version of what is suggested in the topology tutorial, where data is aligned between IMUs based on the epoch alone. Above I stated that "If I just assume the epochs are correct, then events that actually happened at the same time may appear as far as 0.3 seconds apart." I was using events to align the relative times and you suggest using epochs to align the events which functionally should be the same.

Just for posterity, I went through and lined up all the data points for both IMUs in a single file, matching values with the closest epochs between sensors. Finding the peaks for each sensor in this new file with "aligned" epochs gave nearly identical results to before.

The clocks are not right. They do not record the same amount of time passing as each other, even over relatively short time spans. Using the epoch or the elapsed time doesn't change the result because they are the same fundamental measurement.

I didn't say line them up 1 to 1. I said line them up intelligently. It may involve duplicating or removing entries.

I didn't word it well in my previous comment but that is indeed what I did. Using the Left IMU as the reference, because it had fewer data points, I took each Left IMU epoch and found the epoch from the Right IMU that was closest to it. Then I lined up the Left IMU data and Right IMU data in one row with the Left IMU timestamp. I implemented it in a way where entries could be duplicated or ignored completely.
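For anyone who wants to reproduce this, the nearest-epoch matching can be sketched like this. It is a minimal version, not my actual script: the function name is illustrative, and it assumes both epoch lists are sorted in ascending order and that the second list is non-empty.

```python
from bisect import bisect_left

def nearest_match(ref_epochs, other_epochs):
    """For each reference epoch, return the index of the closest epoch
    in `other_epochs`.

    Both lists must be sorted ascending and `other_epochs` must be
    non-empty. An entry of `other_epochs` may be matched more than once
    (duplicated) or never (ignored). Ties go to the earlier sample.
    """
    matches = []
    for t in ref_epochs:
        i = bisect_left(other_epochs, t)
        if i == 0:
            j = 0
        elif i == len(other_epochs):
            j = i - 1
        else:
            before = other_epochs[i - 1]
            after = other_epochs[i]
            j = i - 1 if t - before <= after - t else i
        matches.append(j)
    return matches

# Hypothetical epochs in milliseconds: left is the reference sensor.
left = [100, 110, 120]
right = [98, 103, 109, 111, 125, 131]
idx = nearest_match(left, right)
aligned = [(left[k], right[j]) for k, j in enumerate(idx)]
```

Matching this way only picks which samples share a row. It does not change the timestamps themselves, so any disagreement between the two clocks is carried straight through to the aligned file.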

However, even if I didn't follow the "intelligent" synchronization correctly, how does assuming the epochs are correct solve my problem? The epoch comes directly from the elapsed time, and my biggest concern with the data is that between the first hit and the second hit, a 5 minute period, the two IMUs differed in their measurement of the time that had passed by almost 0.3 seconds. It shouldn't matter which timestamp I look at, because the fundamental issue is a difference in the time that passes between events, a difference that cannot be explained with a single offset or as a linear function of time.

0.3s in 5min = 0.1% clock drift.

@Laura: would that be within specs (it may be)?

That sounds fair.