Sensor Fusion Mode

I am currently streaming data from the MetaMotionR sensor in fusion mode (NDOF). I was reading the tutorial and saw that the accelerometer and gyroscope operate at 100 Hz and the magnetometer at 25 Hz.

My question is about the frequencies of streamed sensor data. I need to get the Euler angles, accelerometer xyz, and gyroscope xyz.

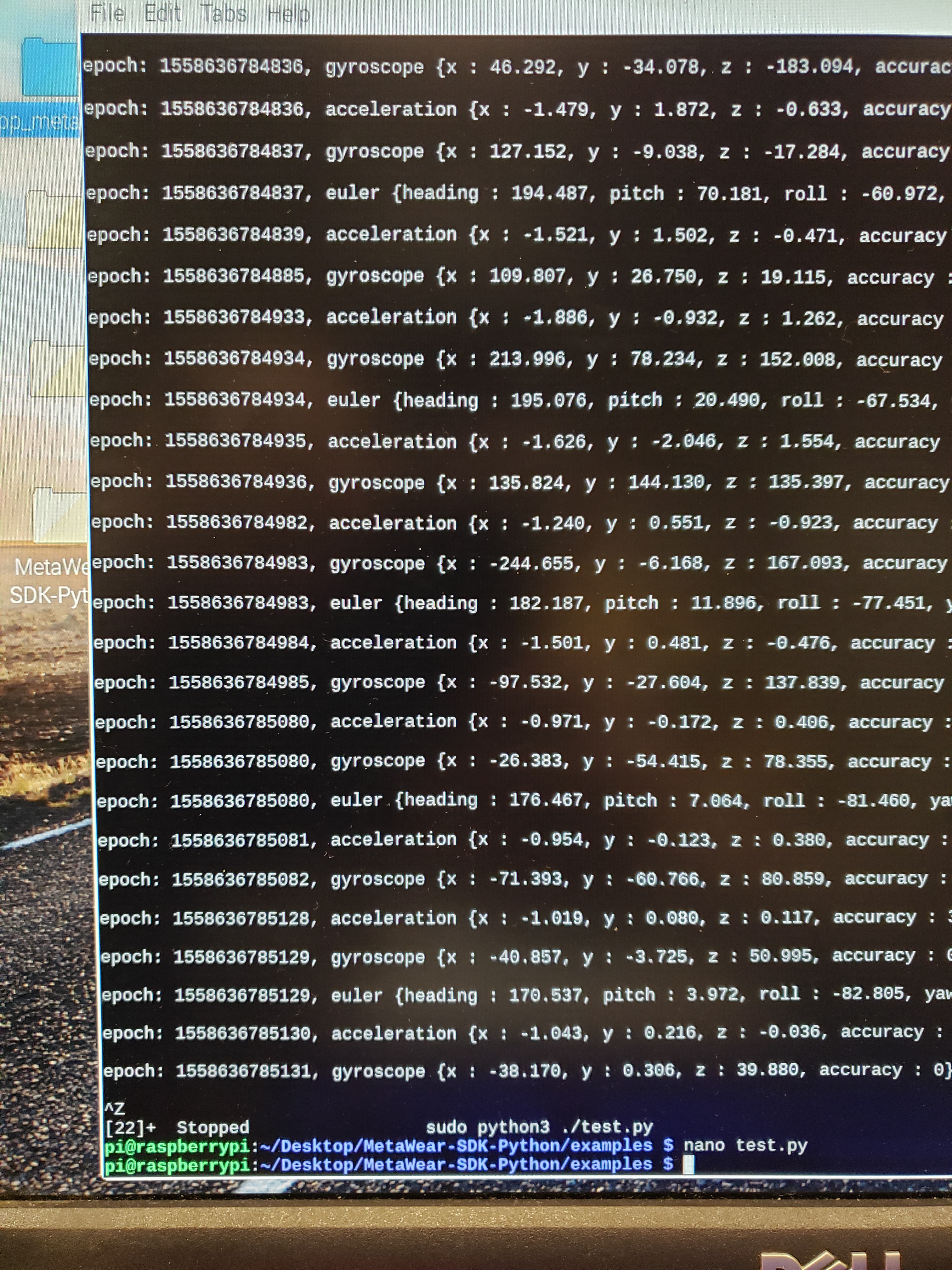

When streaming SensorFusionData.CORRECTED_GYRO, SensorFusionData.CORRECTED_ACC, and SensorFusionData.EULER_ANGLE, I see irregular jumps in the epochs (timestamps). See the uploaded image.

The jumps are roughly 47 ms and sometimes almost 100 ms. Some samples are also only 2 ms apart.
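One way to quantify these gaps is to take the forward difference of consecutive epochs and flag any interval far from the nominal period. This sketch assumes the epochs are in milliseconds and that the nominal rate is the 100 Hz mentioned above (a 10 ms period); `find_timing_gaps` and its `tolerance` parameter are hypothetical names chosen for illustration.

```python
from typing import List, Tuple


def find_timing_gaps(epochs_ms: List[int], nominal_period_ms: float = 10.0,
                     tolerance: float = 0.5) -> List[Tuple[int, int]]:
    """Return (index, interval_ms) for each interval that deviates from the
    nominal period by more than tolerance * nominal_period_ms."""
    gaps = []
    for i in range(1, len(epochs_ms)):
        dt = epochs_ms[i] - epochs_ms[i - 1]  # forward difference of timestamps
        if abs(dt - nominal_period_ms) > tolerance * nominal_period_ms:
            gaps.append((i, dt))
    return gaps


print(find_timing_gaps([0, 10, 20, 67, 69, 79]))  # [(3, 47), (4, 2)]
```

A 47 ms interval followed by a 2 ms one is the pattern you would expect if samples are buffered and then delivered late in a burst.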

Is this due to a limitation of streaming all three at once? If so, is there a way to package the fusion data so it can be streamed at a higher frequency?

Comments

Maybe not stream at a higher frequency, but receive the data at regular intervals, so that I can get as close to real time as possible?

You can stream Euler angles OR accelerometer and gyroscope data, not both. The Bluetooth link can't stream all of this at the same time, so packets are being dropped.

Basically, Bluetooth LE allows for about 100 Hz of live throughput (data sampling rate), and that's the default rate for sensor fusion. Once you add more sensors, like the accelerometer, the Bluetooth link can't keep up and starts dropping packets.

You have to downsample the sensor fusion output using our APIs, or use the data fuser module to push 120 Hz (that will still only give you acc + Euler at most).
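As a rough budget, taking the ~100 Hz figure above as an assumption (real throughput also depends on the connection interval and packet sizes), you can estimate how much each signal would need to be downsampled to fit. `downsample_factor` is a hypothetical helper for this arithmetic, not part of the MetaWear API.

```python
import math


def downsample_factor(requested_hz: float, n_signals: int,
                      budget_hz: float = 100.0) -> int:
    """Smallest integer factor k such that n_signals * requested_hz / k
    fits within budget_hz (an assumed link budget, not a measured one)."""
    total_hz = requested_hz * n_signals
    return max(1, math.ceil(total_hz / budget_hz))


# Euler + corrected acc + corrected gyro, each requested at 100 Hz
print(downsample_factor(100, 3))  # 3
```

Three fusion outputs at 100 Hz therefore land at roughly 33 Hz each under this budget, which is why the reply suggests picking Euler OR acc + gyro instead.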

Please see our tutorials for more info.

As a test, I streamed only the Euler angles, and I still get jumps similar to the image I posted before. The simple code I am using is below.

I am not sure why I still get jumps in the epochs. I also tried getting the Euler angles with the Windows MetaBase app, and there the epochs jump by at most 25 ms. I am keeping the sensor within 5 m with no obstruction for both this Python example and the MetaBase app. Does the MetaBase app use NDOF mode for Euler angles?

Tune the connection interval to support high-frequency streaming:

https://github.com/mbientlab/MetaWear-SDK-Python/blob/master/examples/stream_acc.py#L33#L34

Use an accounter (count mode) to ensure you are not losing any packets.

https://mbientlab.com/cppdocs/latest/dataprocessor.html#accounter
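On the host side, the counter values added by the accounter can then be checked for gaps. This sketch assumes you have already extracted the counter from each received sample into a list of integers, that it increases by exactly 1 per sample, and that it does not wrap around during the recording; `count_dropped` is a hypothetical helper.

```python
from typing import Iterable, Optional


def count_dropped(counts: Iterable[int]) -> int:
    """Total number of samples missing between consecutive counter values.
    Assumes the counter increments by 1 per sample and never wraps."""
    dropped = 0
    prev: Optional[int] = None
    for c in counts:
        if prev is not None and c > prev + 1:
            dropped += c - prev - 1  # every skipped count is a lost packet
        prev = c
    return dropped


print(count_dropped([1, 2, 3, 5, 6, 9]))  # 3
```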

Yes


Thank you, that is what the problem was and the accounter verifies it as well.

I have a follow-up question about streaming and logging. While searching the forums for a similar question, I found a post by "fyjs" in which Eric mentioned that logging and streaming at the same time is not recommended.

1) Why isn't it recommended to use the board this way?

2) Are there any issues with detecting all Euler angles when the sensor is attached to the outside of the foot? I can't seem to capture any yaw data (which would be rotation about the x-axis according to the calibration orientation).

1) A better question is: why do you want to log and stream at the same time? There is no benefit at all; it just uses up computing time.

2) No, we have created apps without this issue with the sensor placed on all parts of the body. Doing it correctly takes mathematical and developer expertise: www.miotherapy.com (our sister company)

I'm sorry, I may not have been specific enough in my first question. I want to stream the Euler angles but log the accelerometer and gyroscope data, so that I can view the streamed results while the accelerometer and gyroscope data is being logged.

To the second question,

If I can't do the first one, math can be used to get pitch and roll from the accelerometer data, but wouldn't that just be an estimate? Would I need to apply low-pass filtering to prevent fluctuations? And for yaw, would I need data from both the accelerometer and the magnetometer?
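For reference, the common textbook approach is: roll and pitch from the gravity vector measured by the accelerometer (only valid while the sensor is not otherwise accelerating, so yes, it is an estimate), a first-order low-pass filter to smooth fluctuations, and a tilt-compensated compass for yaw that combines the magnetometer with the roll/pitch estimate. The axis and sign conventions below (x forward, y right, z down) are assumptions and may not match the board's axes, magnetometer calibration is ignored, and all function names are hypothetical.

```python
import math
from typing import Iterable, List, Tuple


def tilt_from_accel(ax: float, ay: float, az: float) -> Tuple[float, float]:
    """Roll and pitch in degrees from one accelerometer reading.
    Assumes gravity dominates the reading (sensor roughly static)."""
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return math.degrees(roll), math.degrees(pitch)


def tilt_compensated_heading(mx: float, my: float, mz: float,
                             roll_deg: float, pitch_deg: float) -> float:
    """Yaw (heading) in degrees: rotate the magnetometer vector back to the
    horizontal plane using roll/pitch, then take its angle.
    Assumed convention: x forward, y right, z down; no hard/soft-iron correction."""
    r, p = math.radians(roll_deg), math.radians(pitch_deg)
    xh = mx * math.cos(p) + my * math.sin(p) * math.sin(r) + mz * math.sin(p) * math.cos(r)
    yh = mz * math.sin(r) - my * math.cos(r)
    return math.degrees(math.atan2(yh, xh))


def low_pass(samples: Iterable[float], alpha: float = 0.1) -> List[float]:
    """First-order IIR low-pass (exponential moving average).
    Smaller alpha means heavier smoothing and more lag."""
    out: List[float] = []
    for x in samples:
        out.append(x if not out else alpha * x + (1 - alpha) * out[-1])
    return out


print(low_pass([0, 10, 10], alpha=0.5))  # [0, 5.0, 7.5]
```

The filter trades noise for lag, which matters on a foot during walking where accelerations are large; that trade-off is the reason fusion modes like NDOF also bring in the gyroscope.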

Sorry for the extra questions, I want to be sure I am on the right path to figuring out how to obtain all the data.

You can definitely do that!

You can also see how much you can push using the fuser module (see our tutorials for more info).

Thank you for your help, it's greatly appreciated!

I have another issue that I've been looking at this morning. The yaw and pitch data are fine and show the expected repetitive pattern of walking on a treadmill. However, the roll data has odd discontinuities that happen only at a certain instant. I understand why it flips from 180 to -180, and that's fine.

During walking, when the foot reaches a point where it is almost vertical (heel at its highest point), there is a jump in the received sensor data; otherwise it behaves as expected. Attached is a zoomed image of a portion of the output plotted in MATLAB. Both the MetaBase app and the Python script show this issue. The problem is where the roll jumps from near -180 degrees to almost 0 and then back.

I can get rid of it by shifting the roll reference so that it is centered at zero, and by removing the discontinuity with a threshold on the forward difference. I just wanted to understand what is happening with the sensor.
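The workaround described above can be sketched in two steps: shift the roll so the ±180° wrap sits at 0°, then reject samples whose forward difference from the last accepted value exceeds a threshold. The 90° threshold and the function names are arbitrary choices for illustration, and holding the last value is a naive fix that would also suppress a genuine sustained jump.

```python
from typing import List


def recenter_roll(angle_deg: float) -> float:
    """Shift roll by 180 deg so values near +/-180 map to values near 0."""
    return angle_deg % 360.0 - 180.0  # Python % returns a non-negative result here


def reject_jumps(angles: List[float], threshold: float = 90.0) -> List[float]:
    """Forward-difference spike rejection: if a sample differs from the last
    accepted sample by more than `threshold`, hold the last accepted value.
    Naive: a real, persistent jump would be held indefinitely."""
    out: List[float] = []
    for a in angles:
        if out and abs(a - out[-1]) > threshold:
            out.append(out[-1])
        else:
            out.append(a)
    return out


raw_roll = [-178.0, -179.0, -2.0, -1.0, -179.0, -178.0]
print(reject_jumps([recenter_roll(a) for a in raw_roll]))
# [2.0, 1.0, 1.0, 1.0, 1.0, 2.0]
```

Recentering first matters: without it, the ordinary 180/-180 flip would also exceed the threshold and be rejected.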

I am not sure exactly, but I would guess that, because of the way the sensor is placed on the foot, you are right at an axis threshold (hovering around where the axis flips from 1 to -1 in its orientation). This can be fixed by changing the sensor placement on the foot or by doing some "smart processing" as you mentioned.

When we do this sort of analysis, we map the sensor data to a video so we can compare the data to what the sensor is "doing" (how it is moving at that instant in time).
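The alignment step amounts to finding, for each video frame, the sensor sample nearest in time. This sketch assumes both timestamp lists are in milliseconds, sorted ascending, and already on a common clock (for example, offset using a distinct event such as a tap that is visible in both); `nearest_sample_indices` is a hypothetical helper.

```python
import bisect
from typing import List


def nearest_sample_indices(sensor_ms: List[float], frame_ms: List[float]) -> List[int]:
    """For each video frame timestamp, return the index of the closest sensor
    sample. Both lists must be sorted and share the same clock."""
    indices = []
    for t in frame_ms:
        i = bisect.bisect_left(sensor_ms, t)  # first sample at or after t
        if i == 0:
            indices.append(0)
        elif i == len(sensor_ms):
            indices.append(len(sensor_ms) - 1)
        else:
            # pick whichever neighbour is closer; ties go to the earlier sample
            closer_after = sensor_ms[i] - t < t - sensor_ms[i - 1]
            indices.append(i if closer_after else i - 1)
    return indices


# 100 Hz sensor samples vs. ~30 fps video frames
print(nearest_sample_indices([0, 10, 20, 30, 40], [0.0, 33.3, 66.7]))  # [0, 3, 4]
```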

Can you take video of the foot and match it with the sensor data?

PS: This is the way professionals do it.

Hi all,

I've used the above script to get the Euler angles from the sensor with ease. I would also like to know how I can save the angles to a text file in Python.

I've tried several ways to extract the data and save it, but I don't understand how the angles are wrapped up in the lambda function.
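One way around the lambda is to replace it with a named function that writes each sample to a CSV file. The writer below is self-contained; the wiring to the SDK shown in the comments is an assumption based on the MetaWear Python examples (`parse_value` returning an object with `heading`, `pitch`, `roll`, `yaw` fields, `data.contents.epoch` for the timestamp, and `FnVoid_VoidP_DataP` wrapping the callback), so check those names against your SDK version.

```python
import csv
from typing import TextIO


class EulerCsvWriter:
    """Write Euler-angle samples to a CSV stream, one row per sample."""

    FIELDS = ("epoch_ms", "heading", "pitch", "roll", "yaw")

    def __init__(self, stream: TextIO) -> None:
        self._writer = csv.writer(stream)
        self._writer.writerow(self.FIELDS)  # header row

    def write(self, epoch_ms: int, heading: float, pitch: float,
              roll: float, yaw: float) -> None:
        self._writer.writerow((epoch_ms, heading, pitch, roll, yaw))


# Hypothetical wiring (SDK names assumed, not verified here):
# def euler_handler(ctx, data):
#     v = parse_value(data)
#     writer.write(data.contents.epoch, v.heading, v.pitch, v.roll, v.yaw)
# callback = FnVoid_VoidP_DataP(euler_handler)

if __name__ == "__main__":
    # newline="" is what the csv module expects for files opened in text mode
    with open("euler.csv", "w", newline="") as f:
        writer = EulerCsvWriter(f)
        writer.write(1000, 10.5, 1.0, -2.0, 10.5)
```

Keeping the file open for the whole session, rather than reopening it in every callback, avoids slowing down the data handler.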

If you could kindly suggest a possible way forward, that would be greatly appreciated.

Thanks in advance.

Please note it is your responsibility to learn Python. This is not a question about our sensors but rather about how to use and code Python.

Hi Laura, thank you for your reply.

I was not saying something is wrong with the sensors; I was wondering whether someone has built a similar application. I've searched for a number of ways to do it and haven't found a solution yet.

Unfortunately, you are asking a general Python coding question, not a sensor or MetaMotion question, so this is not the appropriate forum.

Just do a few Python dev tutorials online, get experience with Python scripting and coding, get comfortable with the language, and then you can start using our APIs.

Our APIs require that you be a confident developer.

Thanks

Hi,

Is it possible to get only part of the Euler angles?

For example:

SensorFusionData.EULER_ANGLE("heading") or SensorFusionData.EULER_ANGLE.heading

The second one worked for me!